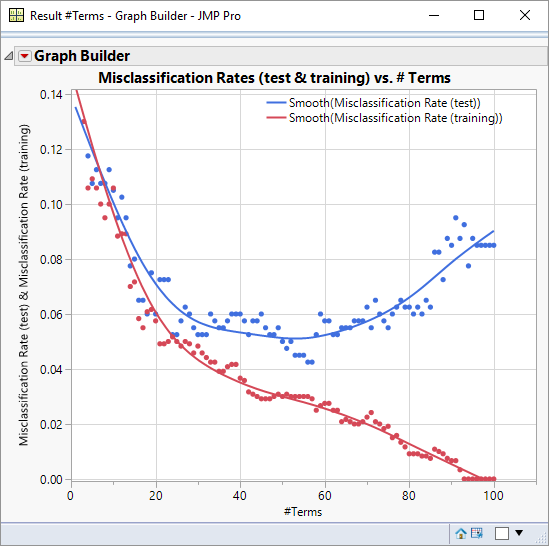

This is a misclassification rate of 0.25: a statistic that is reported in the Fit Details outline:Ĭonsidering that I am only using one pixel, I’m very happy with that. On balance 502 (119+383) of the 2000 data points were misclassified.

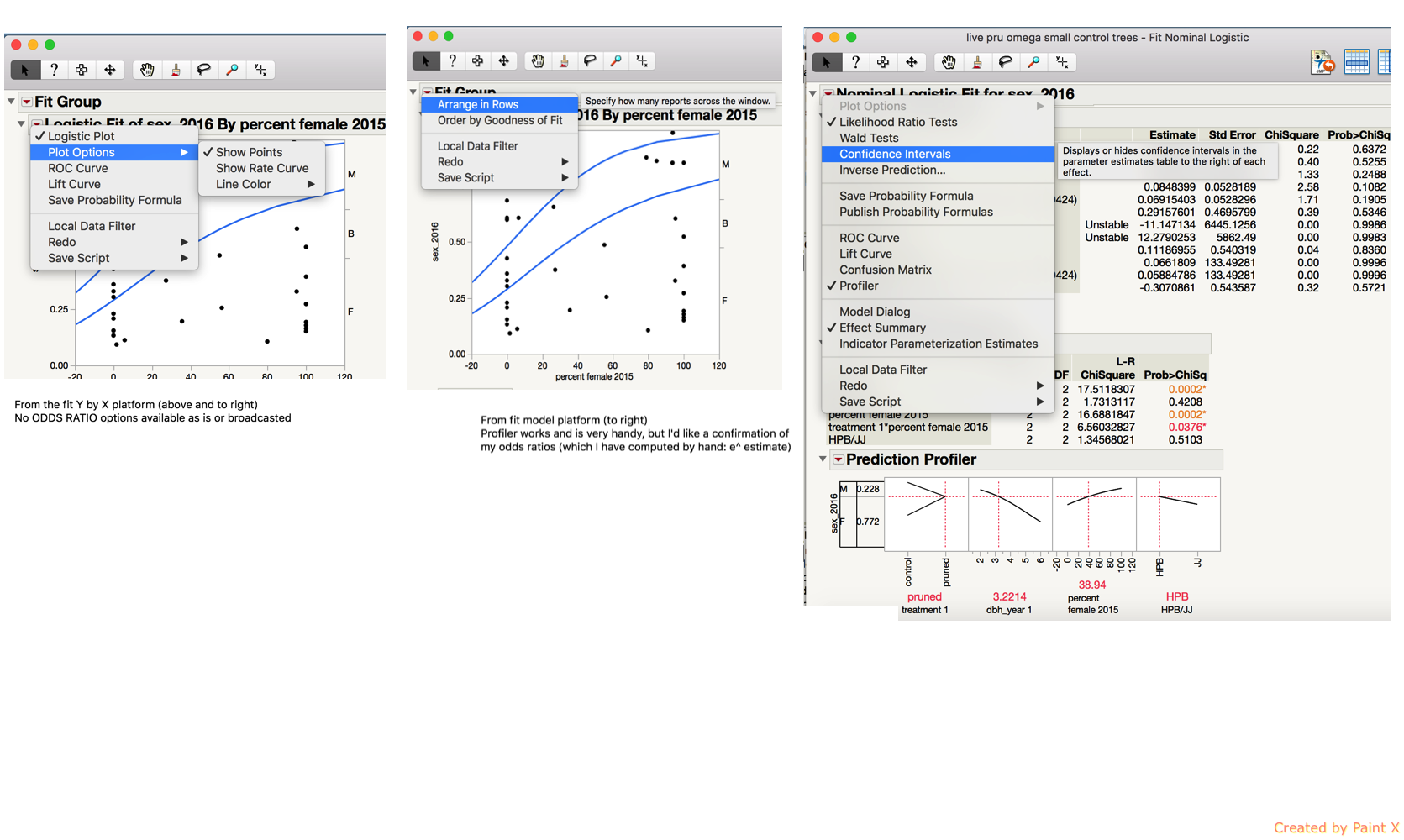

So what does the confusion matrix tell me? The model does a fairly good job of classifying the sixes correctly and gives me a better than evens chance of predicting a five. Using the model I would like my chance of success to be “lifted”. So roughly speaking if I were to make a random guess of the value of a digit I would have a 50:50 chance of being correct. The output from a logistic model can look intimidating – so I am going to focus on just one piece of information – the confusion matrix: The logistic model will evaluate that balance of probabilities. I’ve highlighted the pixel as the red point below:īut on the balance of probabilities it looks like a reasonable selection. I’m going to use column 216 – that corresponds to a pixel that i believe should be “on” for a five, and “off” for six. For my predictor variables I can use one or more of the Pixel Data columns. When you use the Fit Model platform with a response variable that has a nominal modelling type JMP automatically selects the logistic personality. The new columns have been placed in a column group Pixel Data: Ndt << Group Columns( "Pixel Data", cols) Here is a script to do the hard work of creating these columns: I know that the images are 28×28, so that means that there will be 784 columns of pixel data. So for each row of the table, I want to create a column for each pixel in the image. My response variable is clear: it is my digit column that contains the classification value 5 or 6 (see my last blog for further details).īut what about my X variables that will describe the features within the images? I’m going to start with the raw pixel data. Below is the same function but using a parameter value (beta) of 1000: Ideally, we identify parameters (beta) and features (X) that provide a clear distinction between the classes. 
The most probable class is therefore the one with a probability greater than 50% in this way the logistic function can be used to describe either a continuous probability outcome or a discrete binary outcome. This makes it particularly useful for representing probability responses.įor a binary classification problem such as the fives-and-sixes classification, the curve can be used to represent the probability (p) of one of the classes: the probability of the other class is simply 1-p. This function plots as an S-shaped (sigmoidal) curve:Ī useful characteristic of the curve is that whilst the input (X) variable may have an infinite range, the output (Y) is constrained to a range 0 to 1. The standard logistic function takes the following form: To understand logistic regression it is helpful to be familiar with a logistic function. When it is discrete the equivalent modelling technique is logistic regression. This model-form is used when the response variable is continuous.

The most common form of regression is linear least-squares regression. I will also take the opportunity to look at the role of training and test datasets, and to highlight the distinction between testing and validation. In this post I’ll model the data using logistic regression. In a recent post I created a table that contained two classes of data: images that represent either the handwritten digit ‘5’ or the digit ‘6’.
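For readers who want to check the numbers outside JMP, here is a minimal Python sketch of the ideas above. It is not the JSL used in this post, and it rests on assumptions of mine: that beta simply multiplies the input of the logistic function, and that p is the probability of a five. The function names are my own and are purely illustrative. It shows the output staying between 0 and 1, the curve steepening when beta is 1000, the 50% rule for picking a class, and the misclassification rate from the 119 + 383 errors in 2000 rows.

```python
import math


def logistic(x, beta=1.0):
    """Logistic function of beta * x; the result always lies between 0 and 1.

    Assumption: beta scales the input, so beta=1 gives the standard curve.
    """
    z = beta * x
    # Branch on the sign of z so math.exp never gets a large positive argument.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def most_probable_class(p, classes=("5", "6")):
    """Pick the class whose probability exceeds 50%.

    Assumption: p is the probability of classes[0]; classes[1] has 1 - p.
    """
    return classes[0] if p > 0.5 else classes[1]


def misclassification_rate(errors_a, errors_b, total):
    """Share of rows predicted wrongly, given the two off-diagonal counts."""
    return (errors_a + errors_b) / total


if __name__ == "__main__":
    # Compare the gentle standard curve with the much steeper beta=1000 curve.
    for beta in (1, 1000):
        values = [logistic(x, beta) for x in (-0.01, 0.0, 0.01)]
        print(beta, [round(v, 4) for v in values])

    # Counts quoted in the post: 119 + 383 errors out of 2000 rows.
    print(misclassification_rate(119, 383, 2000))
```

With beta set to 1 the three printed values barely move away from 0.5, whereas with beta set to 1000 they jump from nearly 0 to nearly 1 over the same tiny interval, which is the sharper class boundary described above.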
